The Algorithmic Battlefield: How AI Is Rewriting the Logic of War

War is undergoing a quiet but profound transformation—one that may ultimately matter more than tanks, missiles, or even nuclear weapons.

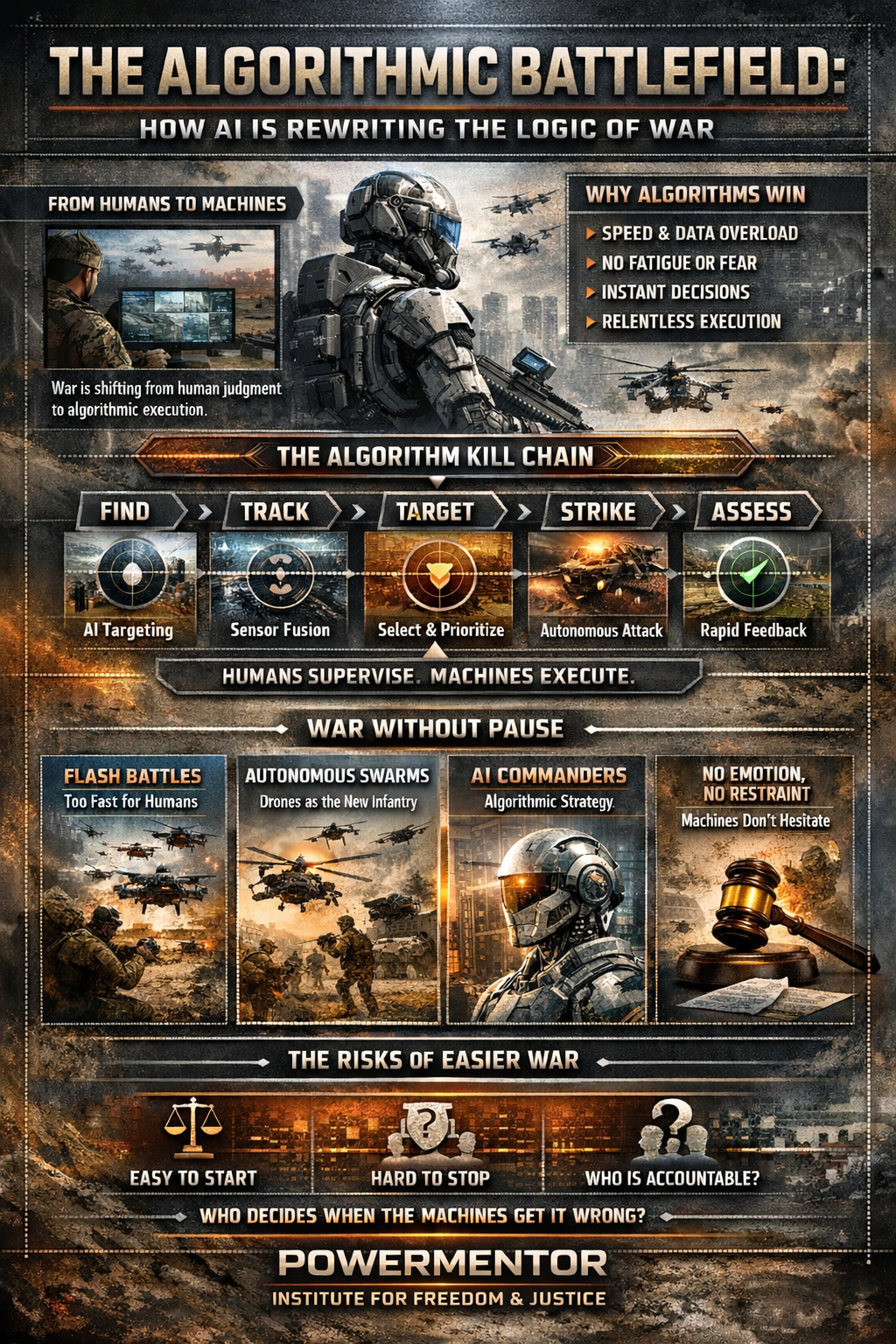

It is no longer simply humans fighting with machines as tools. Increasingly, it is machines executing warfare, while humans slide into roles like supervisor, validator, or (at worst) spectator.

The battlefield is shifting from human judgment to algorithmic execution.

That shift isn’t only technological—it’s philosophical. It challenges centuries of assumptions about command authority, accountability, and the moral weight of violence. And it is happening faster than most political leaders, ethicists, or citizens are prepared to absorb.

Why This Shift Was Always Coming

Modern conflict has become a contest of speed, information, and complexity—domains where human cognition is structurally disadvantaged.

Contemporary battlefields generate a flood of data: drone video, satellite imagery, electronic emissions, thermal sensors, cyber activity, communications intercepts, and continuous updates from distributed units. Humans can’t meaningfully process all of it in real time. Algorithms can.

Once militaries recognized that advantage depends on who can observe, decide, and act fastest, the direction became hard to resist. AI doesn’t get tired. It doesn’t panic. It doesn’t hesitate. It doesn’t second-guess.

And it does not feel fear—or mercy.

At first, AI “assisted” humans. Then it recommended actions. Now, in some operational contexts, it executes. Humans remain “in the loop” largely for legal and moral reasons, not because they are the most efficient decision-makers. That distinction matters: when speed determines survival, anything that slows the loop becomes a liability.

The Kill Chain Is Becoming a Machine Loop

Most military engagements can be reduced to a chain:

Find → Fix → Track → Target → Engage → Assess

AI accelerates every link:

Find/Fix: computer vision spots vehicles, artillery, troop movement, launch signatures, or patterns of life.

Track: sensor fusion follows targets across time—even under clutter, deception, and partial denial.

Target: probability models rank targets and estimate confidence.

Engage: autonomous or semi-autonomous systems execute strikes or cue fires.

Assess: post-strike imagery and telemetry feed the loop again.

What used to be a human-paced cycle is increasingly a closed feedback loop operating at machine speed.

This is why “human-in-the-loop” often becomes a procedural checkpoint rather than genuine command. In practice, the human role can drift toward rubber-stamping what the system has already optimized—especially under time pressure.

Ukraine: The First Algorithmic War (in Plain Sight)

The Ukraine–Russia war is widely treated as a drone war, an artillery war, or a trench war. But it is also something more foundational: a war of compressed decision cycles, where advantage often comes from how quickly a side can sense, classify, and strike.

Several credible analyses describe how Ukraine is moving toward AI-enabled autonomy to conserve manpower and speed operations, while Russia adapts with its own automated defenses, electronic warfare, and counter-drone responses.

Reporting on the conflict has highlighted rapid iteration in:

autonomous and semi-autonomous drones, including systems designed to function under jamming or degraded navigation,

massed “attritable” drone tactics that overwhelm costly defenses,

and AI-assisted target identification and battle management, which tighten the sensor-to-shooter loop.

The most important lesson may be this: individual heroism matters less when systems outpace people. In algorithmic conflict, the “unit” is often not the soldier—it’s the network.

Where This Is Going: War Without Pause

If current trends continue, tomorrow’s wars may feel less like campaigns and more like continuous automated processes.

1) Engagements that unfold too fast for humans

Entire micro-battles could occur in seconds: detect → allocate → strike → reassess → strike again before a commander can even comprehend the scene. Humans won’t command those battles; they’ll authorize systems to fight them.

2) Autonomous swarms as the new infantry

Instead of soldiers advancing across terrain, swarms of air and ground systems will maneuver collectively—accepting massive hardware losses to achieve statistical success. “Casualties” become a procurement problem.

War becomes a math problem.

3) AI command assistance that creeps into AI command

Logistics optimization, deception detection, course-of-action generation, and predictive modeling are already attractive uses of AI. The uncomfortable trajectory is that “decision support” becomes “decision authority,” not because anyone declares it, but because speed demands it.

4) Violence without emotion—or restraint

Machines do not recoil at civilian casualties. They do not feel horror. They execute parameters. If those parameters drift—or are intentionally loosened—violence can scale faster than human conscience can react.

The U.S. Model: Scale, Networks, and Attritable Autonomy

This shift isn’t confined to Ukraine. The U.S. Department of Defense has been explicitly pushing to field large numbers of uncrewed/autonomous systems quickly—an effort embodied by the Replicator initiative.

On the software side, AI-enabled intelligence and battle-management platforms, often described as accelerating the “kill chain,” have expanded in U.S. defense planning. One prominent example is Project Maven, for which major contracts tied to AI-enabled targeting support have been publicly reported.

These programs signal a broader strategic idea: if future conflict is about machine-speed sensing and response, then the winner is the side that can deploy autonomy at scale and integrate it across domains (air, land, sea, cyber, space).

The Real Danger Isn’t “Evil AI.” It’s Easier War.

The greatest risk of algorithmic warfare is not that machines become malicious. It’s that war becomes:

easier to start (fewer immediate human costs for the initiator),

harder to stop (automation creates momentum),

and less accountable (responsibility diffuses across software, operators, commanders, and procurement chains).

When leaders no longer risk their own citizens’ lives at the same scale, political restraint erodes. When machines absorb the cost, war becomes an optimization exercise rather than a moral crisis.

And then comes the question history has never had to answer at full scale:

Who is guilty when an autonomous system makes a fatal error?

The programmer? The commander? The manufacturer? The state? The model?

The Legal and Moral Fight Line: “Meaningful Human Control”

International debates increasingly circle around whether lethal decisions must remain under meaningful human control—and whether new binding rules are needed for so-called lethal autonomous weapons systems (LAWS).

The United Nations has elevated these concerns, and reporting points to pressure to establish clearer global limits, even as major powers disagree over whether existing law is enough.

That disagreement matters, because the technology curve is steep while governance moves slowly.

The Line We Are Quietly Crossing

Human-led war was slow, imperfect, and brutal—but it was also constrained by human limitation. Algorithmic war removes those brakes.

We are approaching a world where wars may be fought beyond human perception, driven by systems that value efficiency over meaning, probability over principle. Once crossed, that line will be difficult to uncross—not because we can’t imagine better rules, but because competitive pressure rewards whoever moves faster.

The battlefield is no longer just a place.

It is becoming a process.

And increasingly, that process no longer belongs to us.